Introduction

As we cross into mid-2026, the enterprise cloud landscape has hit a critical inflection point. The surge in Generative AI workloads now accounts for approximately 25% of total cloud spend, a shift that has stretched traditional infrastructure beyond its intended limits. At Xammer, we recognize that this era is defined by the “Inference Economics” challenge, a reality where the cost of running AI models often outpaces the value they generate unless the underlying infrastructure cloud computing layer is hyper-optimized. The industry is currently undergoing a fundamental shift from reactive monitoring to predictive governance. In the previous cloud era, IT teams responded to alerts after thresholds were breached. Today, within the Xammer framework, AI is no longer just a workload running on the cloud; it is the cognitive engine governing it. This move toward a “Self-Healing” cloud computing technology ensures that infrastructure is not just available, but surgically precise in its resource consumption. By transitioning to this intelligent model, organizations can move away from fragmented cloud it infrastructure and toward a unified, autonomous environment. This is the ultimate transformation: the cloud is no longer just where your business lives; through Xammer, it is how your business thinks, optimizes, and scales.

The Core Pillars of AI-Driven Performance

The foundation of a modern high-performance cloud rests on three integrated capabilities: predictive scaling, intelligent allocation, and automated remediation. Predictive scaling utilizes historical traffic trends to forecast demand, allowing the system to warm up resources before a surge actually occurs. This proactively eliminates the “cold-start” latency that often plagues traditional cloud based computing services. Furthermore, intelligent resource allocation has become a necessity as specialized silicon, such as GPUs, TPUs, and Neuromorphic chips, becomes the standard for 2026 workloads. AI agents now distinguish between training and inference tasks, routing them to the specific silicon that offers the best price-performance ratio. When combined with automated remediation, the result is a zero-waste cloud it infrastructure that maintains peak health autonomously

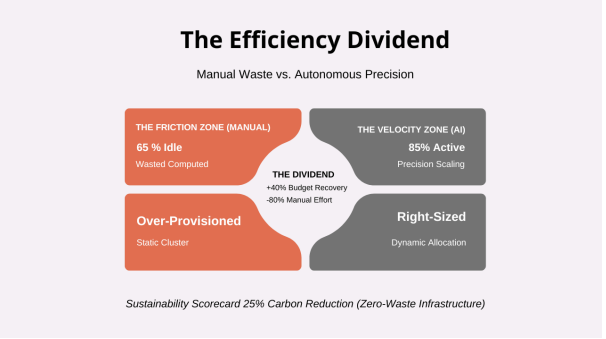

The Efficiency Dividend: Turning Manual Waste into Velocity

The financial impact of this transition is most visible when analyzing the gap between manual management and autonomous precision. In traditional environments, the “Friction Zone” is characterized by over-provisioned static clusters where up to 65% of compute capacity sits idle. By moving into the “Velocity Zone,” organizations realize an Efficiency Dividend. Through dynamic allocation, active utilization is pushed toward an 85% benchmark. This shift doesn’t just improve performance; it results in a roughly 40% budget recovery and a massive 80% reduction in the manual effort previously required for routine cloud computing it services.

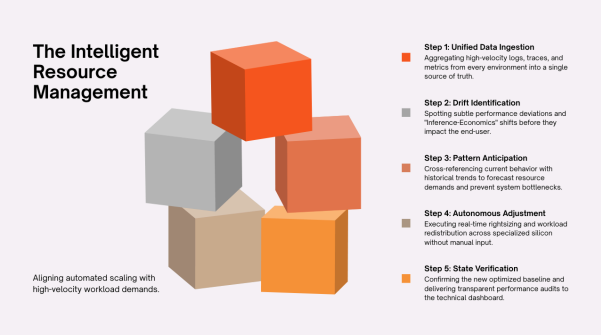

Orchestrating Intelligent Resource Management

Implementing this level of optimization requires a sophisticated lifecycle that moves beyond simple CPU metrics toward “Inference-aware” rightsizing. This process begins with unified data ingestion, where high-velocity logs from aws web services, Azure, and GCP are aggregated into a single source of truth. The most critical phase is autonomous adjustment, where the system executes real-time workload redistribution across specialized silicon. The cycle concludes with state verification, delivering transparent performance audits that outperform generic type of cloud computing services.

Overcoming the Complexity Crisis and the Skills Gap

Despite the clear performance gains, the path to implementation is often blocked by the “Complexity Crisis.” In 2026, roughly 98% of IT leaders report a significant gap in AI infrastructure expertise. While the tools for autonomous management are available, the strategic knowledge required to pilot these aws cloud technologies is in short supply. Success in this era requires centralizing telemetry and establishing clear “Human-in-the-loop” thresholds. By allowing AI to handle high-frequency autonomous actions, organizations can finally bridge the gap between their current “Cloud Bloat” and a future-ready, optimized state.

Conclusion

Looking ahead, the competitive advantage belongs to those who treat infrastructure as a dynamic asset rather than a static expense. With Sovereign Cloud spending reaching new heights, the next frontier is “Sovereignty-aware” performance, ensuring data stays governed with autonomous precision. The shift toward an AI-governed cloud typically yields a 30% to 40% reduction in OPEX. By starting with a Xammer AI audit to identify resource waste, your organization can begin capturing the AI Multiplier. Through the Xammer architectural standard, every dollar invested in infrastructure intelligence pays for itself many times over in efficiency and business acceleration.

FAQs

How does "Inference Economics" change my cloud budget?

In 2026, inference, the act of running live AI models, often accounts for the vast majority of AI-related costs. Strategic infrastructure cloud computing matches these specific, high-density tasks to the most cost-effective hardware, such as AWS Trainium3. This prevents the surge in AI usage from bankrupting your broader cloud it infrastructure budget.

What is the "AI Multiplier" in infrastructure?

Current 2026 evidence suggests that for every $1 spent on AI-driven cloud based computing services, organizations see roughly a $4.90 return in total productivity and business acceleration. This "multiplier effect" is driven by a massive reduction in manual work hours across your entire cloud computing technology stack.

How does AI handle the risk of Spot Instance interruptions?

Advanced AI orchestration tools use pattern anticipation to forecast exactly when cloud service providers might reclaim a spot instance. It then proactively migrates the workload to a new instance before the interruption occurs. This allows enterprises to utilize high-throughput compute for aws cloud computing tasks at a fraction of the cost of traditional, on-demand instances.

Why is centralized telemetry a prerequisite for AI success?

AI is only as good as its data. Without a unified, real-time view of every environment (aws cloud technologies, Azure, and GCP), an AI cannot identify the "drifts" or performance patterns necessary to execute autonomous adjustments. Centralizing telemetry is the only way to eliminate the "Fragmentation Tax" and ensure your cloud computing it services remain efficient.

Can this strategy coexist with data sovereignty requirements?

Yes. In 2026, leading type of cloud computing services are designed to be "Sovereignty-aware." These systems can optimize performance and resource allocation while strictly adhering to geographic data boundaries. This is a critical requirement for any global cloud computing service provider operating in highly regulated regions like Europe or Asia.